Context Engineering: The Missing Layer in Agentic Applications

In this post from The Agentic Advantage series, we explore one of the most important — and often overlooked — aspects of building AI agents: Context Engineering.

If you're new to agents, check out the previous post in the series to learn the basics of building agents.

Why Context Engineering Matters

Building AI agents has become significantly easier thanks to frameworks, SDKs, and ADKs that abstract much of the complexity involved in creating LLM-powered applications.

However, the hardest part of building reliable agentic systems isn't creating the agent itself — it's managing the context.

Unlike traditional chat applications, many AI agents are built to perform specific tasks. These systems often cannot rely on long conversational exchanges to gradually gather context. Instead, the agent must receive all necessary information at the right moment in order to make the correct decision and complete its task.

As AI systems grow in complexity, context becomes the primary engineering challenge.

As Andrej Karpathy put it, LLMs are like a new kind of operating system: the LLM is like the CPU, and its context window is like the RAM, serving as the model's working memory.

What Is Context Engineering?

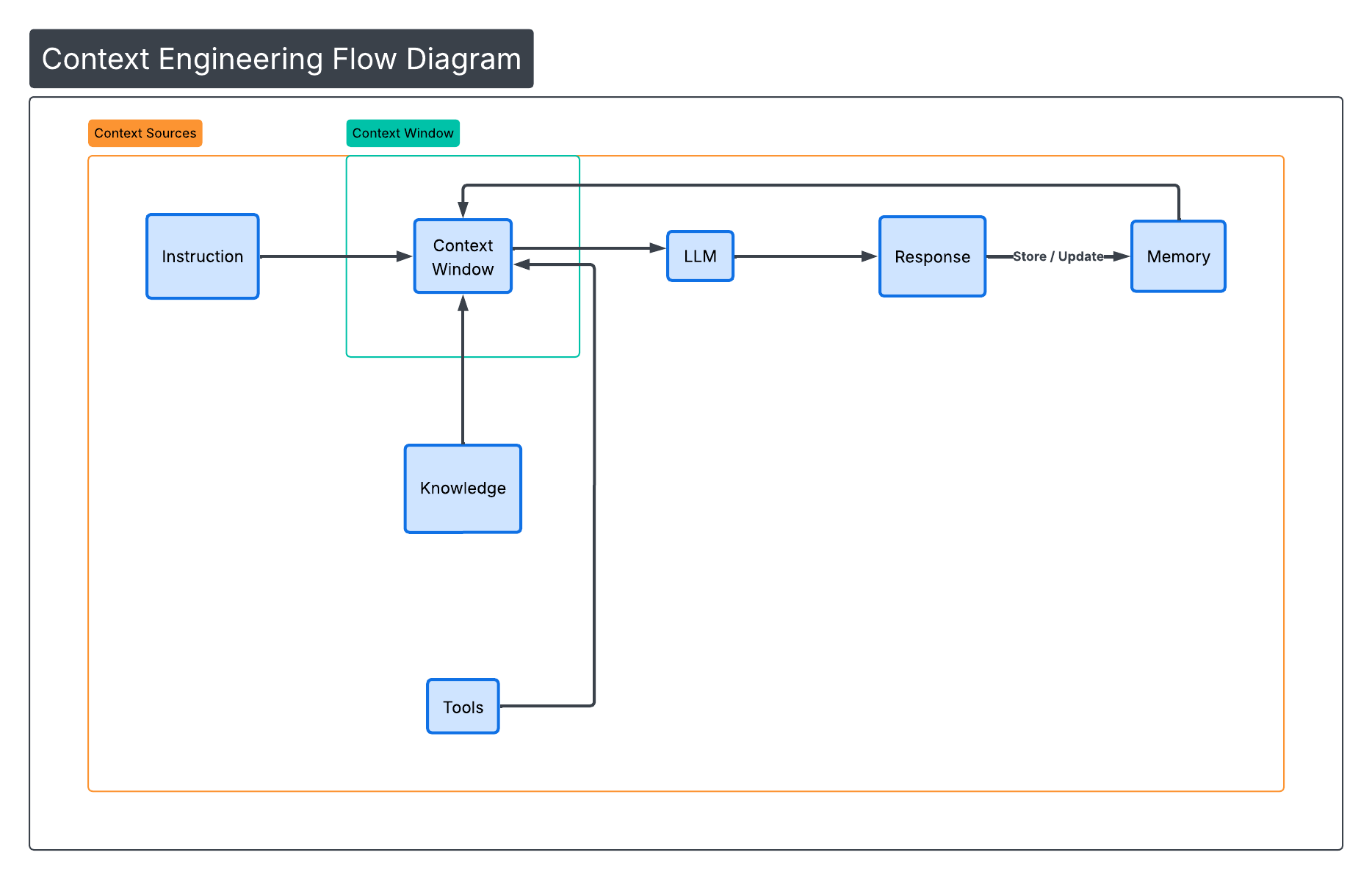

Context Engineering is the practice of designing systems that provide the right information, in the right format, at the right time to help an LLM complete a task.

Context can include:

- System instructions

- User messages

- Retrieved documents

- Tool definitions

- Tool outputs

- Memory

- Skills

- External data

- Messages from other agents

Every LLM has a finite context window (for example 8K, 32K, or 128K tokens, and sometimes more). All the information above must fit inside that window while the model reasons about the task.

Our job as AI engineers is to ensure that only the information relevant to the current task occupies that space.

(Figure 1: Context Window Components)
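To make the idea of a finite window concrete, here is a minimal sketch of packing context items into a token budget. Everything in it is an assumption for illustration: `ContextItem`, `estimate_tokens`, and `build_context` are hypothetical names, the priority scale is invented, and the word count is only a crude stand-in for a real tokenizer.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    kind: str      # e.g. "system", "user", "memory", "document"
    text: str
    priority: int  # hypothetical scale: lower number = more important


def estimate_tokens(text: str) -> int:
    # Crude approximation: count whitespace-separated words.
    # A real system would use the model's own tokenizer.
    return len(text.split())


def build_context(items: list[ContextItem], budget: int) -> list[ContextItem]:
    """Greedily keep the most important items that still fit in the budget."""
    selected = []
    used = 0
    for item in sorted(items, key=lambda i: i.priority):
        cost = estimate_tokens(item.text)
        if used + cost <= budget:
            selected.append(item)
            used += cost
    return selected


items = [
    ContextItem("system", "You are a helpful barista agent.", priority=0),
    ContextItem("user", "Make me a cappuccino.", priority=0),
    ContextItem("memory", "User usually orders oat milk.", priority=1),
    # A large retrieved document that does not fit in a small budget.
    ContextItem("document", "Full 40 page machine maintenance manual " * 50, priority=2),
]

context = build_context(items, budget=50)
print([item.kind for item in context])
```

With a budget of 50 estimated tokens, the oversized document is skipped while the instructions, the user message, and the short memory entry are kept. Greedy packing by priority is only one possible policy; summarizing or chunking the document would be another.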


Example Scenario: A Coffee-Making Agent

Imagine we are building a simple coffee-making agent.

The user says:

Make me a cappuccino.

At first glance, this seems simple. But the agent may need to consider:

- Coffee type

- Milk availability

- Machine status

- User preference history

- Mug size

- Temperature preference

- Dietary restrictions

If we dump all of this information into a single prompt, the context window quickly becomes bloated.

Instead, a well-designed system retrieves only what is needed.

That is context engineering.
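The coffee scenario above can be sketched in a few lines. This is an illustrative sketch, not a reference implementation: the `CONTEXT_SOURCES` lookups, their return values, the `REQUIRED_CONTEXT` mapping, and the function names are all hypothetical. In a real agent, the lookups would be tool calls or database queries.

```python
# Hypothetical context sources; each one is fetched only when asked for.
CONTEXT_SOURCES = {
    "milk_availability": lambda: "oat milk in stock, whole milk out",
    "machine_status": lambda: "ready, water tank at 80%",
    "preference_history": lambda: "last 5 orders were cappuccinos with oat milk",
    "mug_size": lambda: "250 ml",
    "temperature_preference": lambda: "extra hot",
    "dietary_restrictions": lambda: "lactose intolerant",
}

# Assumed mapping from coffee type to the context that decision needs.
REQUIRED_CONTEXT = {
    "cappuccino": ["machine_status", "milk_availability", "dietary_restrictions"],
    "espresso": ["machine_status"],
}


def gather_context(coffee_type: str) -> dict[str, str]:
    """Fetch only the context entries relevant to this coffee type."""
    needed = REQUIRED_CONTEXT.get(coffee_type, ["machine_status"])
    return {name: CONTEXT_SOURCES[name]() for name in needed}


def build_prompt(request: str, coffee_type: str) -> str:
    """Combine the user request with the selected context entries."""
    context = gather_context(coffee_type)
    lines = [f"- {name}: {value}" for name, value in context.items()]
    return f"User request: {request}\nRelevant context:\n" + "\n".join(lines)


prompt = build_prompt("Make me a cappuccino.", "cappuccino")
print(prompt)
```

For a cappuccino, the prompt includes machine status, milk availability, and dietary restrictions, but leaves out mug size and temperature preference. An espresso request would pull in only the machine status.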