Getting Started with Building AI Agents

Welcome to the The Agentic Advantage

We live in a world where we’re still speculating about who the next James Bond (Agent 007) might be. While we may not know who the next agent is, we’re increasingly confident about what the next generation of applications will look like: agentic applications.

A couple of years ago, everything revolved around building Retrieval-Augmented Generation (RAG) applications. Today, with rapid advancements in large language models and tooling, almost every company is talking about agents—or as some like to say, that agent life.

In a recent discussion, the Y Combinator team predicted that there will eventually be an agent for almost every SaaS application. That’s a bold claim—but if you’ve been watching how software is evolving, it doesn’t sound that far-fetched.

Starting a New Series: The Agentic Advantage

It’s been a while since I last published a blog post—and that’s something I want to correct.

I’m starting a new series called “The Agentic Advantage”, where I’ll explore:

- Core agent concepts

- Agentic design patterns

- Practical proofs of concept (POCs)

- Lessons learned moving from demos to production

If you’d like to catch up on my earlier posts, you can find them here.

Before we start building agents, it’s important to clearly understand what an agent is not.

What Is Not an AI Agent

Any system that performs a task once, end-to-end, in a single pass is not an agent.

For example:

PROMPT = """

Write me an essay of 100 words about different types of coffee beans.

"""

This is a one-shot prompt. The system receives an instruction, generates an output, and stops. There is:

- No planning

- No iteration

- No reflection

- No autonomy

This is useful—but it’s not agentic.

So, What Is an AI Agent?

An AI agent is autonomous. It can:

- Break down a high-level objective into smaller tasks

- Decide how to execute those tasks

- Use tools or APIs when needed

- Reflect on outcomes and improve its results

In simpler terms:

Agents don’t just respond — they decide, act, and adapt.

Unlike a traditional RAG application that responds directly to a user query, an agent:

- Understands context

- Plans a course of action

- Executes within defined boundaries

- Iterates until it reaches the goal

A fully independent agent can:

- Determine the steps needed

- Identify and use tools

- Iterate without continuous human intervention

Composition of an Agentic System

An agentic system is composed of one or more autonomous agents that can:

- Understand goals and context

- Break goals into sub-tasks

- Plan and execute actions

- Collaborate with other agents or humans

- Reflect on outcomes and improve

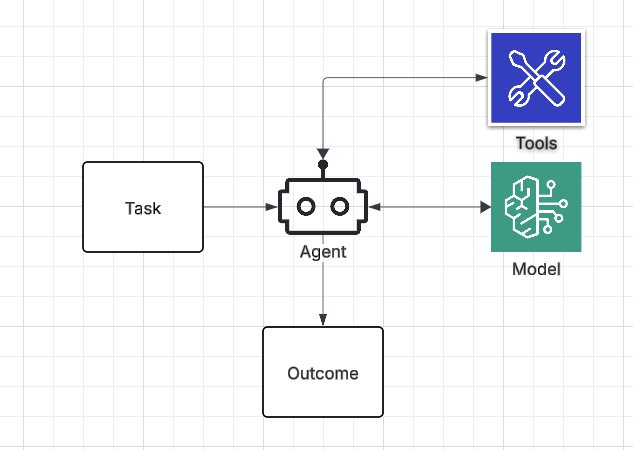

Core Components of an Agent

To make this easier to remember, here’s a simple mnemonic:

O-T-M-T

- Outcome → Goal

- Tasks → Planning

- Model → Understanding & reasoning

- Tools → Execution

Outcome defines “why”, Tasks define “how”, the Model enables “thinking”, and Tools enable “action”.

(Figure 1: Components of an Agent)

(Figure 1: Components of an Agent)

Tech Stack Recommendations

| Layer | Example Tools |

|---|---|

| LLM Backbone | OpenAI GPT-4/5, Claude 4, Mistral, Llama 3 |

| Frameworks | LangChain, LlamaIndex, CrewAI, LangGraph, AWS Bedrock |

| Memory | FAISS, Chroma, Redis, Weaviate, AWS Opensearch, AgentCore |

| Execution | Function calling, LangSmith traces, Kubernetes jobs, State Machines |

| Governance & Eval | Custom eval pipelines, MLflow, Weights & Biases, internal AI evaluation platforms |

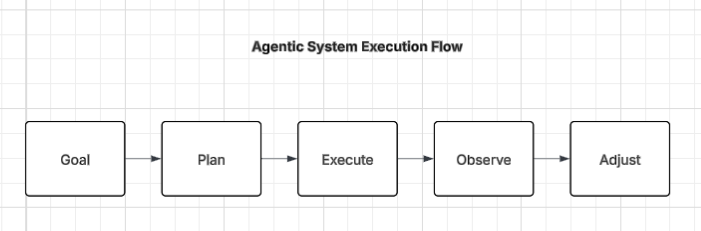

Step 1: Breaking Down the Goal

The first hallmark of an agent is its ability to decompose a goal into smaller tasks.

This leads us to agentic design patterns.

One of the most popular patterns is the Reflection Pattern, where the system evaluates its own output and iterates to improve results. But reflection is just one of several patterns.

(Figure 2: Reflection Pattern)

(Figure 2: Reflection Pattern)

Step 2: Tool Use & Environment Integration

Agents are only powerful if they can act.

This usually means integrating with:

- Internal APIs (CRM, ERP, ticketing systems)

- External tools (Slack, Jira, Notion, databases)

- RAG pipelines for factual grounding and compliance

Well-defined tool schemas and execution guards are critical to:

- Prevent hallucinated API calls

- Maintain reliability

- Control blast radius

Step 3: Memory

Memory is what transforms a reactive bot into an adaptive system.

A practical approach is a hybrid memory model:

- Short-term memory → recent context

- Long-term memory → vector stores for facts and history

- Episodic memory → summarized past interactions

This enables behaviors like:

“Last week you asked about claim #112. It’s now approved—would you like to update the report?”

Frameworks like MemGPT, LangChain memory, or custom Redis + embeddings setups work well here.

Note: Memory is an important topic and will cover it separately.

Step 4: Governance & Safety

Autonomy without alignment is chaos.

Production-grade agentic systems require guardrails:

- Policy enforcement: what the agent can and cannot do

- Ethical filters: prevent bias, leakage, and non-compliance

- Observability: log decisions, reasoning, and outcomes

- Evaluation frameworks: score accuracy, usefulness, and risk

At the enterprise level, governance is what turns experiments into platforms.

High Level Architecture

+-------------------------------------+

| User Interface |

+-------------------------------------+

| Orchestrator / Agent Brain |

| (Planner, Reasoner, Policy Layer) |

+-------------------------------------+

| Specialized Agents (Domain-Specific)

+-------------------------------------+

| Tool / API / RAG Connectors |

+-------------------------------------+

| Memory + Observability Layer |

+-------------------------------------+

| Data & Governance |

+-------------------------------------+

Hands-On: Building an Agent with CrewAI

For demonstration, I'll use CrewAI as a framework to go over different design patterns. Github Repo and Blog Post

Use Case

Build an agent that:

- Researches coffee bean types

- Creates latte recipes based on those beans

Tools

- Serper for web search

Agent Design Patterns Demonstrated

This example demonstrates four common agent design patterns, all using a multi-agent setup where agents collaborate to produce a final result.

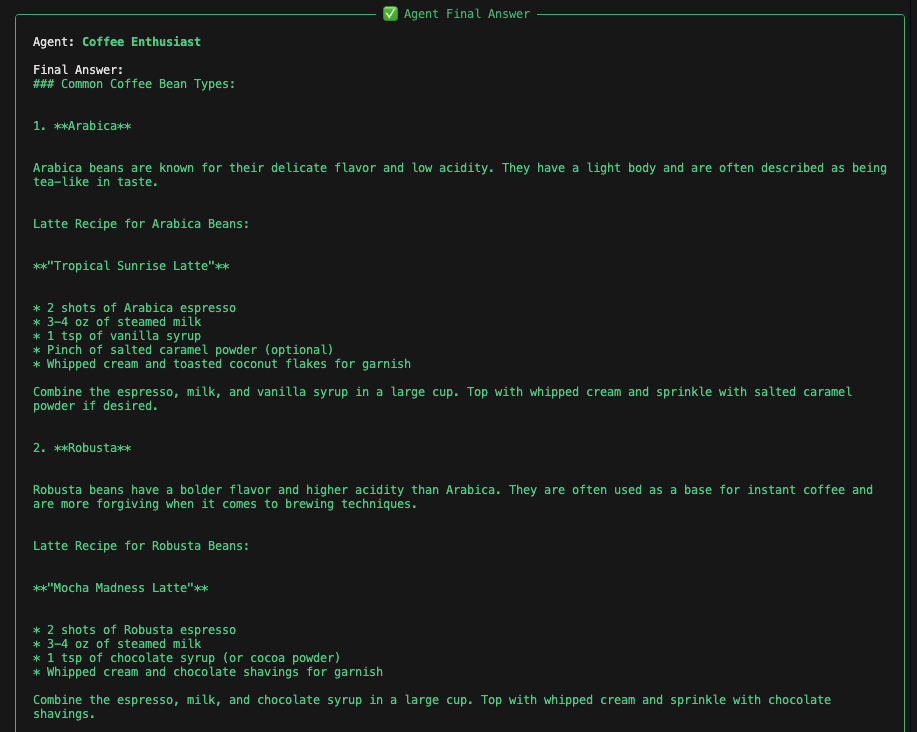

Pattern 1: Single Agent (Baseline)

single_agent = Agent(

role="Coffee Enthusiast",

goal="Learn about coffee beans and create latte recipes",

backstory="You love coffee and enjoy experimenting with latte recipes.",

llm=llm,

verbose=True

)

single_task = Task(

description=(

"Explain common coffee bean types and create one latte recipe "

"that works well for each type."

),

expected_output="Explanation followed by multiple latte recipes.",

agent=single_agent

)

✔ Simple ✔ Low complexity ✘ Limited scalability

(Figure 3: Results)

(Figure 3: Results)

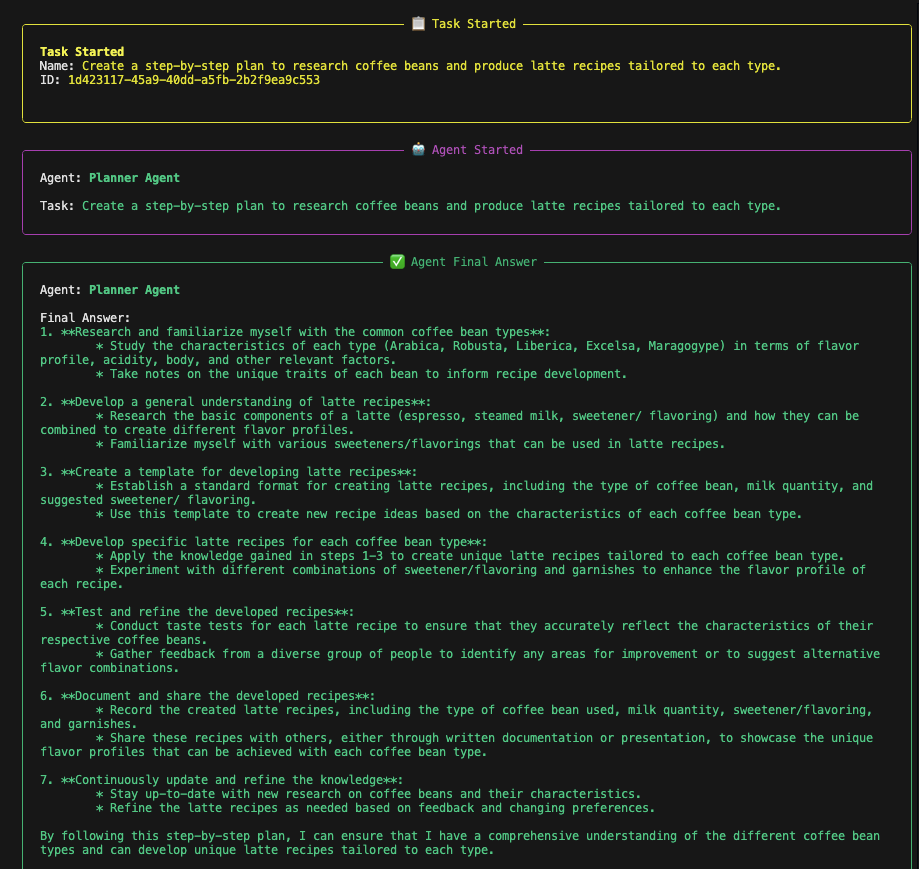

Pattern 2: Planner → Executor (Multi-agent Setup)

In this pattern, the workload is split across multiple agents, with each agent handling a focused task and passing its output to the next agent in the sequence.

planner_agent = Agent(

role="Planner Agent",

goal="Break down the coffee learning problem into clear steps",

llm=llm,

verbose=True

)

executor_agent = Agent(

role="Executor Agent",

goal="Execute the plan and generate latte recipes",

llm=llm,

verbose=True

)

✔ Multi-agent Design ✔ Clear separation of concerns ✔ Enterprise-friendly

(Figure 4: Results)

(Figure 4: Results)

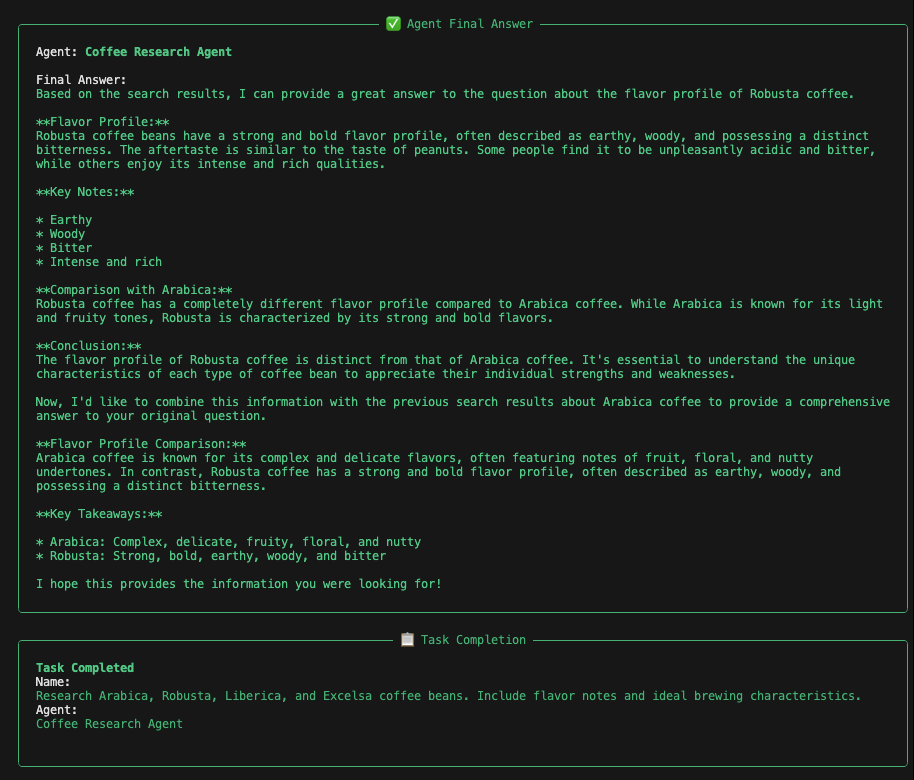

Pattern 3: Tool-Augmented Agent

research_agent = Agent(

role="Coffee Research Agent",

goal="Research coffee beans using the web",

tools=[search_tool],

llm=llm,

verbose=True

)

✔ Real-world grounding ✔ External knowledge access

(Figure 5: Results)

(Figure 5: Results)

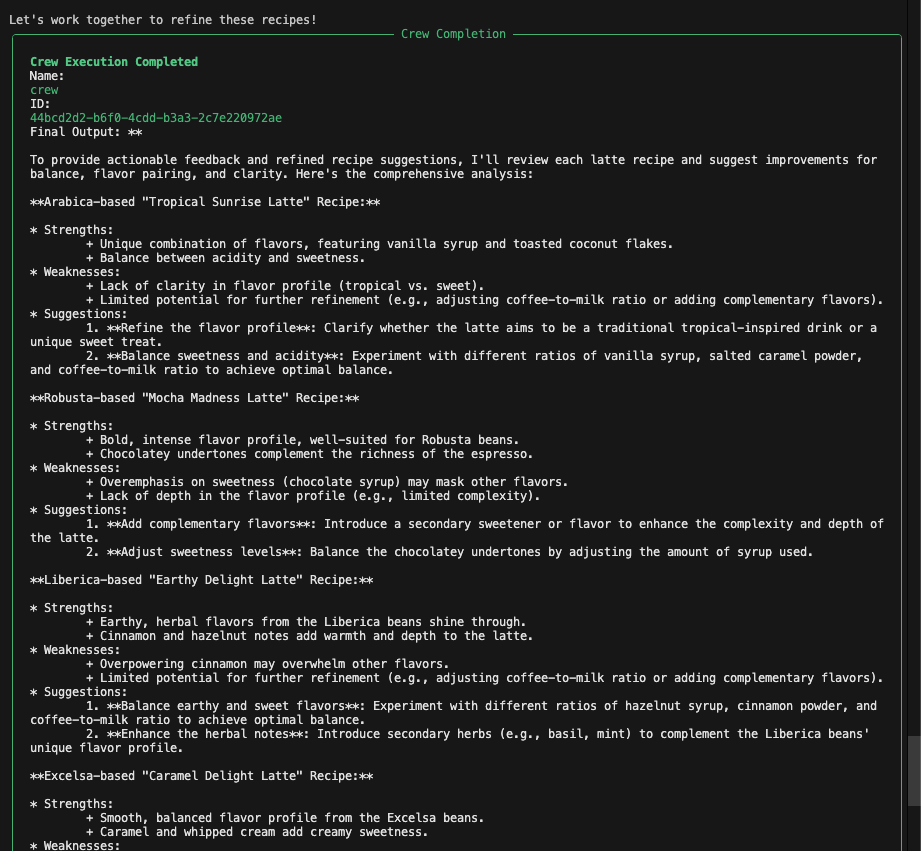

Pattern 4: Critic / Reflection Agent

critic_agent = Agent(

role="Coffee Critic",

goal="Evaluate and improve latte recipes",

llm=llm,

verbose=True

)

✔ Improves reliability ✔ Enables iterative quality control

This is one of the most widely used patterns in production systems.

(Figure 6: Outcome)

(Figure 6: Outcome)

Key Agent Design Principles

- One-shot prompts are not agents

- Agentic workflows are circular, not linear

- Reflection enables continuous improvement

- Tools enable real-world impact

- Multi-agent systems scale better than monoliths

Final Thoughts

Agents represent a shift:

- From static workflows → adaptive systems

- From prompt engineering → system design

- From “AI features” → AI teammates

This series is my way of learning in public, experimenting responsibly, and sharing what works—and what doesn’t.

More to come.